Walk into a typical enterprise, in early 2026, and you’ll hear a range of opinions on what 3D is supposed to do, and how successfully it’s doing it.

From reducing samples and cutting time to market, to overhauling the way brands and retailers think about content creation for downstream audiences, or slashing return rates, digital product creation has been applied to a very broad canvas of business cases over the last decade, with a lot of different metrics for success. And when we take that wide enterprise lens, the result of the same DPC tools and talent being pulled in so many different directions is a very uneven set of outcomes that varies considerably company-to-company.

All of which means that the enterprise discussion around DPC is prone to giving you whiplash. You can talk to one brand and come away with the feeling that DPC has fundamentally changed the way they design products and create patterns, the way they collaborate and communicate with upstream partners, the way they advance their sustainability mission, or the way they sell to consumers or retailers. Then you can talk to another company and be told that 3D under-delivered on its promises, and that they fell back into old patterns and needed to rely again on physical-first workflows.

Focus in on the design and development community, though, and the sentiment is pretty much universal: 3D is uniquely capable of being both a full-featured toolset for creative expression and technical engineering, and a capsule for carrying as much original intent and context as possible through the commercialisation journey. In a very real sense, designers working in 3D see it as a way to enhance the utility and safeguard the vision of their creations, not just as a design tool.

So what’s responsible for the discrepancy between the unanimously positive hands-on experience of the people who use 3D and the less consistent value return for the companies that have pursued 3D strategies?

Or, to put it another way, why has a fundamental, architectural change on the designer’s desk (and in the way designers are taught) not always laddered up to a bigger digital transformation for the brands they work for?

That open challenge – which often gets labelled as “scaling digital product creation,” but should probably be called “maturing DPC,” since growth is not really a necessary component of success – has been central to The Interline’s own DPC Reports for years. It was also at the heart of CLO’s Social London hosted by Epic Games, which I chaired last summer, and where I had the opportunity to bring big brand leaders on-stage to talk about managing the transition from 3D as a clear-cut value proposition in design and development, to 3D as a foundational layer and a lever for much bigger ambitions.

It’s also a question that’s clearly been swirling around the minds of DPC executives. When The Interline recently interviewed CLO Founder Jaden Oh, he captured one of the company’s top priorities as “…building the infrastructure that allows [a] rich, data-heavy 3D asset to flow frictionlessly through every stage [of the product journey], cementing its role as the true single source of truth”.

This gap between digital product creation as it exists in the minds and on the displays of real product creators, and DPC as it’s being debated as part of global transformation strategies, is also something it’s easy to spend a lot of time talking about in theory. (I know because I’ve done my share of it!) But real advancement of this agenda is more likely to come when technology providers, their partners, and the user community gather together to remind themselves of why 3D works when it’s in their hands, but also to be honest about the functional gaps that exist between their own day-to-day reality and the DPC ecosystem’s much bigger promise of changing the way the industry around them operates.

A couple of weeks ago, I went along to a gathering that was convened with exactly that objective.

On 18th February, at Epic Games’ London Innovation Lab, the European team at CLO had put together an evening agenda that included a perspective on the trajectory of their own core tools, a vision for connecting those tools to the real-time ecosystem built by Epic Games (exhaustively detailed in The Interline and Epic’s “Real-Time Roadmap” from last summer), and a spotlight on the workflows and priorities of both independent artists and the creative teams behind some of the UK’s most storied brands.

For everyone here at The Interline, the independent creator angle struck first – simply because the headline artwork for the event was adapted from the work Blockschmidt designed for our DPC Report 2026. As interesting as it was to see those designs brought to life on large-format displays, though, the event also made extensive use of Epic’s markerless motion capture space and allowed attendees to puppet the MetaHuman used in that cover, in real time, and to move around the environment that made up the background of the static images that readers will have seen in the report PDF and here on our website.

As a tangible demonstration of how seamlessly the same digital assets can make the journey from offline visualisation to real-time experiences, this was instructive as well as being immersive – and there’s little doubt that high-quality 3D design work, at the garment, avatar, and environment levels, can translate into impactful statements, installations, and experiences elsewhere.

But that same journey – from the designer’s moodboard to static or motion graphics intended for an end audience – also needs to go through a lot of other stage gates, and touch a lot of other processes, if a 3D representation of a garment is going to become a more complete “source of truth” and traverse the gaps that still feel as though they’re restricting the potential of DPC workflows.

Not coincidentally, those gaps were front and centre in the keynote presentation given by Bryan Kim, Product Manager at CLO, on the night. On-stage, Bryan made two essential cases: that the path from design to virtually any use case for a digital asset should leave no data behind, and that the elements of design-to-production workflows that feel like administrative burdens today should be shouldered by AI in the near future.

The latter we’ll have an opportunity to tackle in depth in The Interline’s AI Report 2026, this spring. The former, though, is about, as Kim put it, “making sure that what you visualise for the market is exactly what you can produce on the factory floor,” and “giving fashion designers the ability to see, test, and iterate on their creations in a seamless and agile way”.

Which, written down here, in isolation, reads like a reiteration of the same central promise that 3D designers have long believed in, and that businesses have long struggled to realise. But the event itself (and the product and partnership roadmap that influenced it) did a decent job of demonstrating that this vision is now being backed by measurable progress when it comes to knitting together the different elements, and the different solutions, that will need to combine to make up a more complete ecosystem.

To wit: the same integrations that made it possible for the garments and the MetaHuman avatar from the front page of The DPC Report 2026 to be piloted through real-time environments are now promised to be ready for deployment in ways that unify other parts of the fashion and footwear product lifecycle that have stayed stubbornly disconnected.

“It’s not about making [3D assets] look pretty,” Kim said at the event. “It’s about ensuring that every fold and every seam in a high-end visualisation is backed by production-ready data.”

That data – the same foundation that Jaden Oh was referring to in his earlier interview – is something that Kim referred to multiple times as a “recipe,” or a set of essential ingredients that have to be combined, and then remain associated with one another, to produce the desired end result, whether that result is a real-time experience or a set of technical specifications.

And as much as Kim and others from the CLO team hinted at a near-term future where AI is able to assist with that journey, this concept of a “recipe” is also one he used to articulate the difference between a generated image of a garment (or a steak dinner, to use his exact analogy) and the data required to not just visualise but actually iterate on, collaborate around, and eventually assemble that garment from real materials and real sewing operations. A generated image is, in other words, a visualisation, not a product.

Without wanting to mix analogies too much, this distinction is similar to what I’ve previously described as the “holy grail” for digital product creation: a single reference point that can be used to make any creative or commercial decision, from fabric selection to a consumer’s choice to put something into a basket. Now, the jury may be out on how far that push to make 3D the sole reference frame for decision-making should go before it delivers diminishing returns (more on that in the DPC Report 2027, coming at the end of this year) but it’s still unambiguously a good thing to see different parts of the DPC ecosystem becoming better integrated in service of realising at least the most impact-heavy parts of that vision.

And that systems integration was on full display at the London event, between CLO’s own platforms (CLO itself, CONNECT, CLO-SET, and now Swatchbook) and the real-time ecosystem that’s made up of Unreal Engine, Twinmotion, and MetaHuman. CLO and Epic have been mutually-invested partners for some time, but this event was evidence that building bridges between fashion design and real-time use cases is a key priority for both companies.

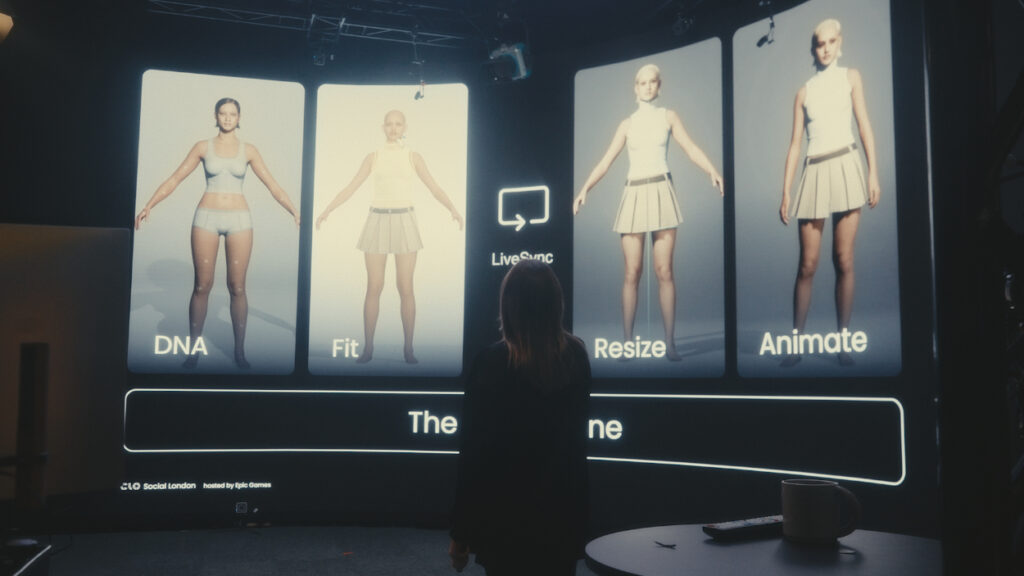

As well as the pre-existing LiveSync plugin, which is used to transfer garments and animations to Unreal Engine in real time, the companies were now demonstrating “DNA Import,” which allows users to bring accurate MetaHuman proportions into their CLO projects, and the new “Parametric Cloth” simulation, which turns a garment into an adaptive asset upon import into Unreal Engine – something that will automatically adjust itself to fit any avatar or character without manual re-rigging or the creation of garment patterns for specific body types.

This was interesting technology for real-time downstream use cases, but I do want to emphasise that it’s not to be confused with actual grading. There’s going to be much more to analyse in this integration of garment creation and real-time tools, but for the purposes of this event the statement was clear: the same philosophy that’s behind the ease with which digital designs can make their way into novel digital applications is also driving seamless workflows that end with a physical output.

As well as reiterating this vision for deeper integration, the London event also featured a panel discussion that framed the same central idea (data integrity from initial design to any conceivable end use) as a journey of narrative consistency – making sure that the story behind a product – as expressed through 3D tools – makes its way, intact, to everywhere that product needs to travel.

This might sound a little woolly (and indeed some of the discussion about garments as time-capsules and historic signifiers was beyond my pay grade as a technology analyst for fashion, rather than someone with real fashion week bona fides) but a second glance reveals a pretty consistent philosophy: that bringing garments to life in 3D is about much more than just the way they look.

As the panel moderator, Costas Kazantis, of the Fashion Innovation Agency, put it, it’s a matter of “understanding and contextualising a garment before creating it”. Which, if I can convert that into language more familiar to me, is an artful way of capturing the principle of progressive virtualisation – the idea that creating something digitally, and then performing the stages of work that follow digitally as well, creates value at every stage at the same time as protecting original intent.

His panel members, Blockschmidt and Kathy McGee from CSM, had a lot to say about how this principle manifests itself in the way 3D is taught in institutional education, how skills are built by grassroots users, and how those technical skills and perspectives feed back into technology roadmaps.

But the speaker whose perspective I took home with me was Yao Yao, who was one of two DPC representatives from Vivienne Westwood who spoke at the event. Yao also articulated the long-term value of connecting 3D design to product development and beyond, but she captured a principle that often gets buried when the discussion turns to “scaling” – that DPC needn’t always be about speed or volume, and that the return can come from giving brands the ability to virtualise not just the initial idea, but the intensive, iterative, and thankless processes of creating, refining, and producing complex silhouettes and constructions.

Not coincidentally, the CLO team also used this event as their opportunity to launch a new design contest, in partnership with Vivienne Westwood and Epic Games. Using MetaHumans as the canvas, this competition will follow on from the partnership that CLO put together with accessory maker Coach last year, and will open up to designers in the second quarter of this year.

The Interline will be interested to see how this competition progresses, not just as observers of the way independent creators are pushing the frontiers of pipeline and workflow development (as detailed in our interview with Blockschmidt) but because it’s also a prime example of an exercise that feels creative and visual at first glance, but that has deep hooks into the way products are actually built, and how they make it to market.

This competition is also emblematic of my major takeaway from this event: that technology vendors can undertake all the research, development, and integration in the world, but that it remains end users who drive uptake and transformation. And if that adoption successfully translates into more virtualisation over time, then we’ll see greater alignment between the value that creative professionals get from DPC – and that brings them to events like this one – and the ambitions that enterprises have for the future.